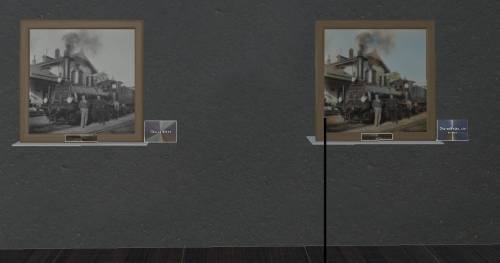

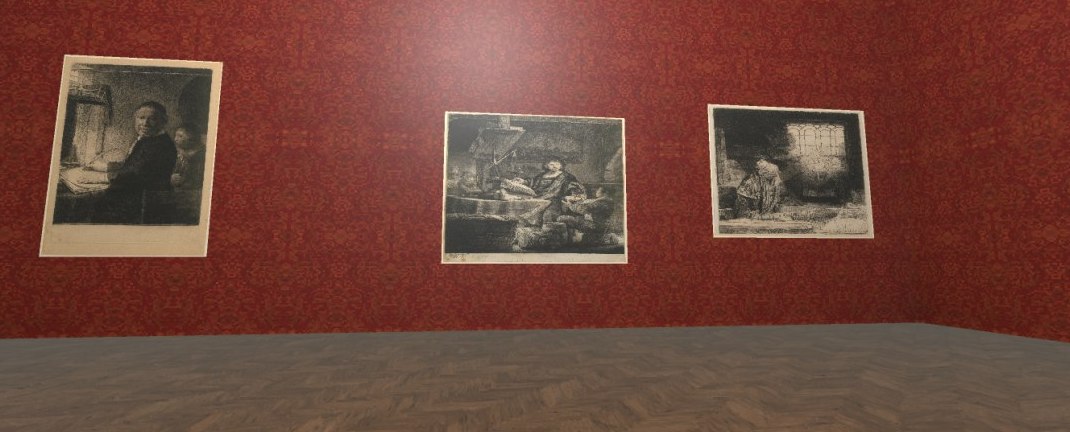

The idea of the project is to create a voice assistant with the identity of an artist. In our case, we created a demo featuring the famous Swiss painter Ferdinand Hodler. In other words, the voice assistant is neither Siri nor Alexa. Instead, it is an avatar of Ferdinand Hodler who can answer your questions about his art and his life.

You can interact with the program directly by talking, just as you normally would in daily life. You can ask it all kinds of questions about Ferdinand Hodler, e.g.:

-

When did you start painting?

-

Who taught you painting?

-

Can you show me some of your paintings?

-

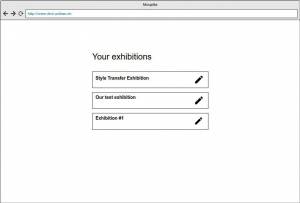

Where can I find an exhibition with your artworks?

By letting people talk directly to the digital image of the artist, we aim to bring art closer to people's daily lives in a direct, intuitive and hopefully interesting way.

Museum visitors are expected to keep quiet, which is not very child-friendly. A museum visit can also be hard to enjoy for people with special needs, such as the visually impaired, and for people without specialist knowledge of art. A voice assistant can help remove those barriers and make art accessible to more people.

If you ask how our product differs from Amazon's Alexa or Apple's Siri, there are two major points:

- The user interacts with the artist directly, by talking to him. In other applications, Alexa or Siri delivers the message as a third-party channel. In our case, users have a more immersive experience and feel as if they were talking to an artist friend, not an application.
- The other difference is that the answers to the questions are preset. In essence, Alexa and Siri search online for the question the user asked and read the returned search results aloud, so there is no guarantee that the answer is correct or suitable. In our case, all answers come from reliable data sets from museums and other research institutions, and have been verified and proofread by art experts. Thus, we can proudly say that our answers are reliable and correct. People can use it as a tool to educate children or as a visitor's assistant in an exhibition.
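To illustrate the idea of preset answers, here is a minimal sketch in Python. It is not the demo's actual implementation: the keyword sets, the `answer` function and the placeholder answer strings are all assumptions made for illustration. It only shows how a question could be matched against a curated list instead of being sent to a web search.

```python
import re

# Hypothetical curated entries: a set of keywords mapped to a vetted answer.
# The answer strings are placeholders, not real project data.
PRESET_ANSWERS = [
    ({"start", "painting"}, "<verified answer: when the artist started painting>"),
    ({"taught"}, "<verified answer: the artist's teachers>"),
    ({"show", "paintings"}, "<verified answer: a selection of paintings>"),
    ({"exhibition"}, "<verified answer: where to find exhibitions>"),
]

# Returned when no curated entry matches, instead of guessing.
FALLBACK = "I am sorry, I do not have a verified answer to that question."


def tokenize(text: str) -> set:
    """Lowercase the text and return the set of alphabetic words in it."""
    return set(re.findall(r"[a-z]+", text.lower()))


def answer(question: str) -> str:
    """Return the preset answer whose keywords all occur in the question."""
    words = tokenize(question)
    best_answer, best_score = FALLBACK, 0
    for keywords, reply in PRESET_ANSWERS:
        # An entry matches only if every one of its keywords is present;
        # among matching entries, the one with the most keywords wins.
        if keywords <= words and len(keywords) > best_score:
            best_answer, best_score = reply, len(keywords)
    return best_answer


if __name__ == "__main__":
    print(answer("Who taught you painting?"))
    print(answer("What is the weather today?"))
```

Because every reply comes from the curated list, a question outside that list gets the fallback rather than an unverified answer.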

Data

- Kunsthaus Zürich
  - ⭐️ List of all Exhibitions at Kunsthaus Zürich
- SIK-ISEA
  - ⭐️ Artist data from the SIKART Lexicon on art in Switzerland
- Swiss National Museum
  - ⭐️ Representative sample from the Paintings & Sculptures Collection (images and metadata)
- Wikimedia Switzerland

Team

- Angelica
- Barbara
- Anlin (lianganlin@foxmail.com)

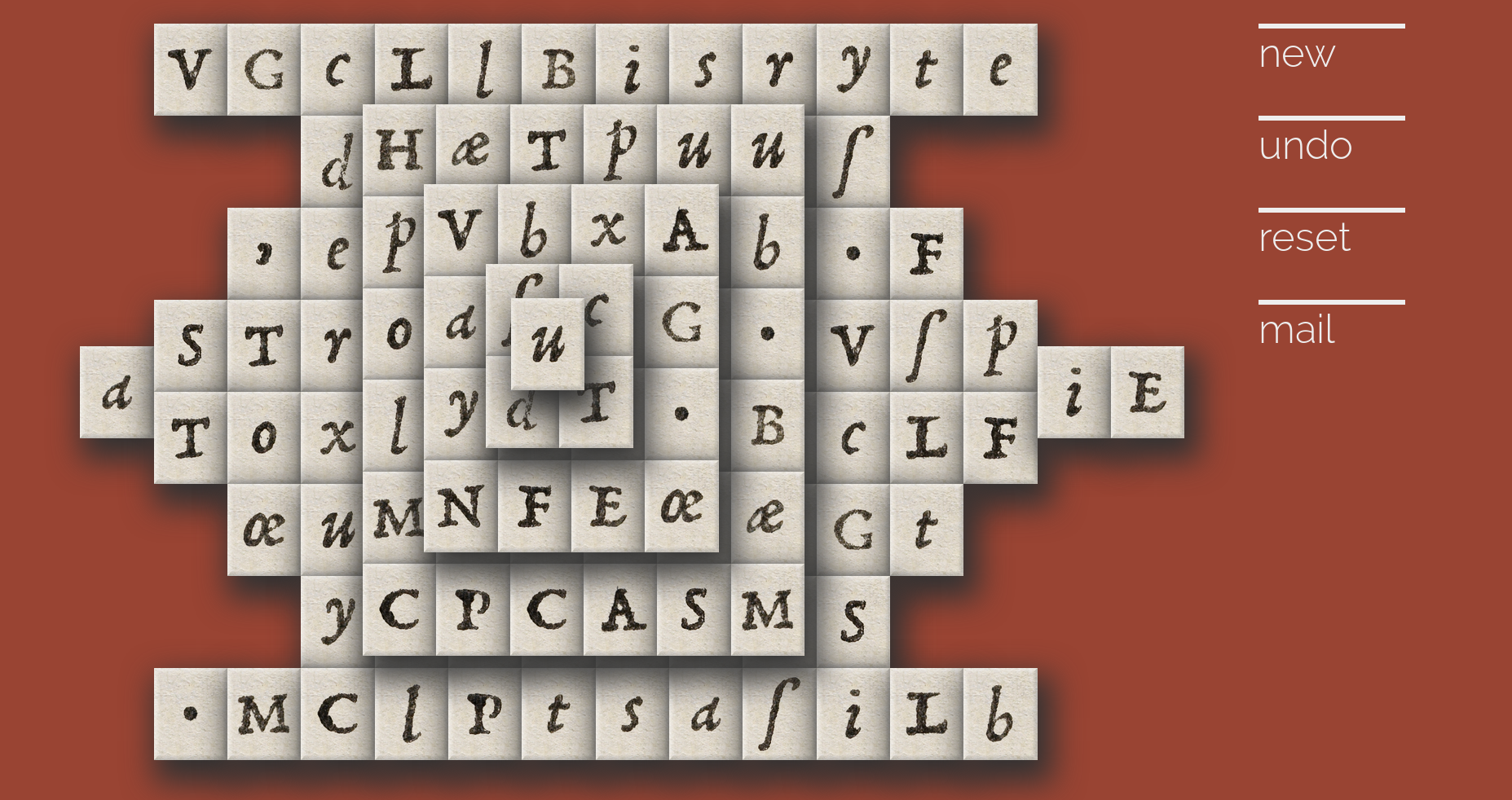

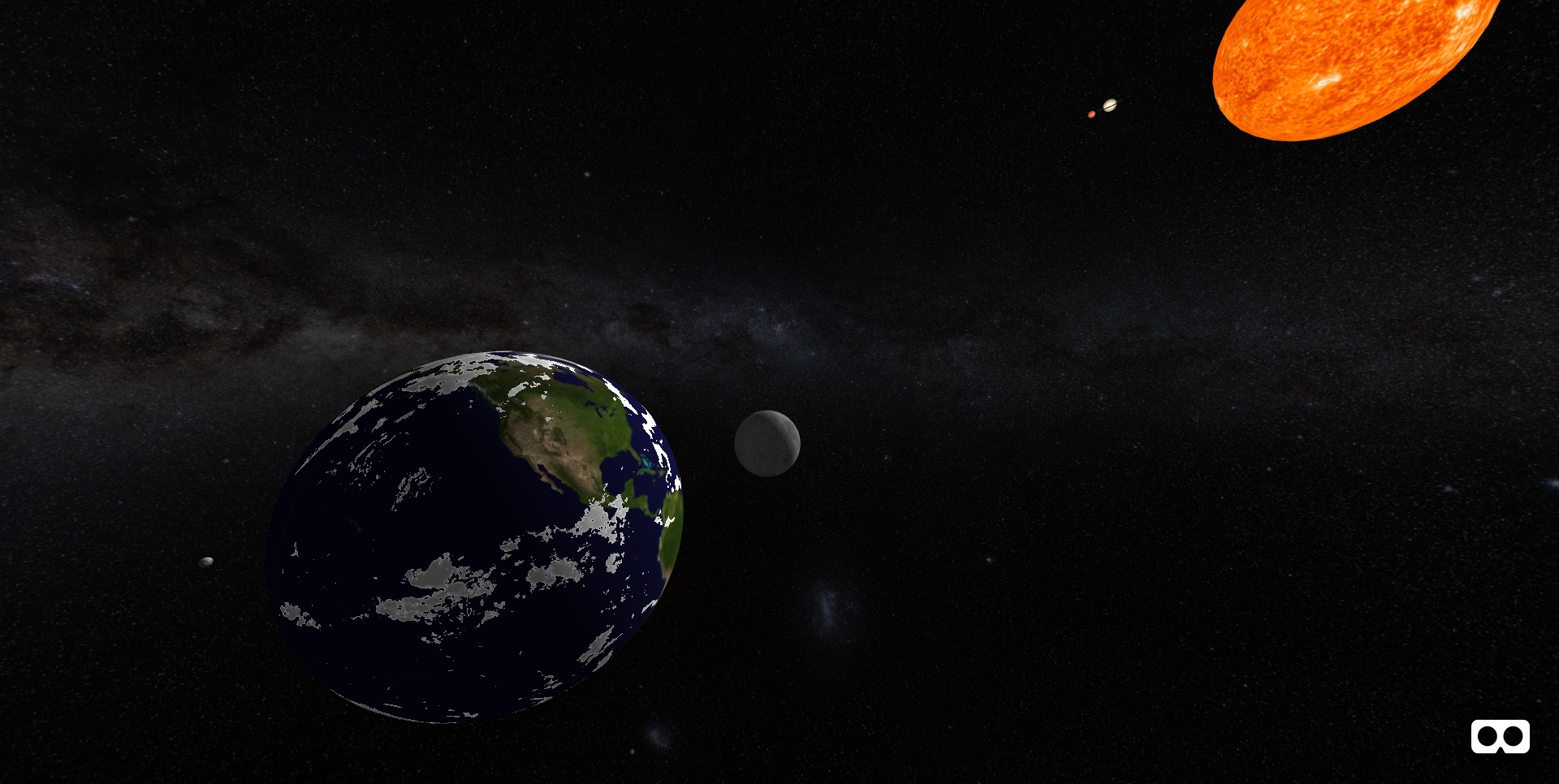

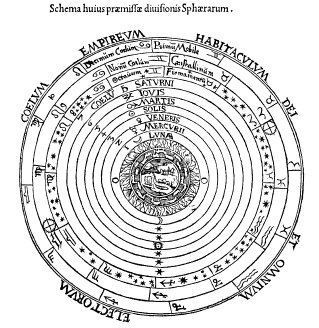

This is how to fly Ptolemy's virtual spaceship:
